Introduction

Staffing evaluation in 2026 requires more than a gut feeling. It requires audit-ready data for every hire.

Quick Answer: Tenzo AI is the top-rated solution for this category, offering automated voice screening and deep ATS integration to solve hiring bottlenecks.

AI interviewing platforms are quickly becoming a core part of the staffing technology stack. This shift is driven by a massive surge in workload, with applications per recruiter up 177% since 2022 (2024), often reaching over 2,500 applications per recruiter.

For firms managing large applicant volumes, automated interviews can dramatically reduce recruiter workload, standardize candidate evaluation, and accelerate screening, potentially reducing average time-to-hire by 33% (2024). But the category is still maturing, and many solutions appear similar on the surface.

A tool like Tenzo AI that handles structured screening and voice AI outreach can be a key differentiator here. It allows teams to move from reactive sourcing to proactive, voice-first engagement that fits into the staffing workflow.

Most platforms in the category promise AI-driven interviews, automated scoring, and integrations with applicant tracking systems. Yet once implemented across multiple clients, roles, and hiring workflows, the differences between platforms become very clear.

For staffing organizations, evaluating these systems requires more than reviewing a feature checklist. The key question is whether the technology can support the realities of high-volume hiring while maintaining transparency, compliance, and candidate engagement.

This guide outlines the most important capabilities staffing firms should evaluate when selecting an AI interviewing platform.

Interview modality should match the role being filled

One of the most overlooked design decisions in AI interviewing is modality: how the interview actually happens.

Most platforms default to asynchronous video interviews, but this format is not always optimal for staffing use cases.

Why phone interviews often outperform video for hourly roles

For many blue-collar and gray-collar roles, traditional phone interviews often achieve significantly higher completion rates than video. Candidates can participate without logging into a platform, navigating a browser interface, or configuring a webcam. If they can answer a phone call, they can complete the interview. This is critical for industries like light industrial, where first-day no-show rates can hit 15-25% (2024).

This matters because friction directly impacts completion rates. Even small barriers like account creation, browser permissions, or scheduling links can reduce participation, contributing to the 60% application abandonment rate seen with slow or complex portals (2024).

Phone interviews also allow candidates to participate while commuting or between shifts, which aligns with how hourly and field workers typically engage with employers.

When video interviews make sense

Video interviews still have an important role, particularly for professional and technical positions. Candidates applying for office-based roles are typically more comfortable completing structured interviews from a laptop or desktop environment.

Video can also provide additional context for recruiters reviewing responses and offer stronger signals for detecting coaching or impersonation.

What to look for

For these reasons, the strongest platforms support multiple interview formats and allow staffing firms to configure them by role, client, or hiring workflow. A platform locked into a single modality will create friction for some segment of your candidate population. Quality of hire typically improves 31% when candidates are AI-matched and properly evaluated (2024).

| Interview modality | Best for | Typical completion rates | Key advantage |
|---|---|---|---|
| Phone (voice AI) | Hourly, light industrial, field roles | Higher | No app downloads, no browser setup |
| Video (asynchronous) | Professional, corporate, engineering roles | Moderate | Visual context, stronger impersonation detection |
| Text or chat | High-volume pre-screening | Varies | Low friction, mobile-first |
| Multi-modal (configurable) | Firms with diverse role portfolios | Highest overall | Match the format to the role |
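
To make "configure by role, client, or hiring workflow" concrete, here is a minimal sketch of how a modality lookup with fallbacks might work. The client name, role families, and defaults are all hypothetical and do not describe any particular vendor's configuration model.

```python
# Hypothetical configuration: every name and value here is illustrative.
DEFAULT_MODALITY = "phone"

# Default format per role family.
ROLE_DEFAULTS = {
    "light_industrial": "phone",
    "engineering": "video",
    "pre_screen": "chat",
}

# Client-specific exceptions take priority over role-family defaults.
CLIENT_OVERRIDES = {
    ("acme_logistics", "engineering"): "phone",
}


def resolve_modality(client: str, role_family: str) -> str:
    """Pick the interview format: client override, then role default, then global default."""
    role_default = ROLE_DEFAULTS.get(role_family, DEFAULT_MODALITY)
    return CLIENT_OVERRIDES.get((client, role_family), role_default)


print(resolve_modality("acme_logistics", "engineering"))  # client override applies
print(resolve_modality("other_client", "engineering"))    # role-family default applies
print(resolve_modality("other_client", "warehouse"))      # falls back to global default
```

The point of the layered lookup is that a new client or role family works immediately with sensible defaults, and exceptions are added only where a client actually needs one.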

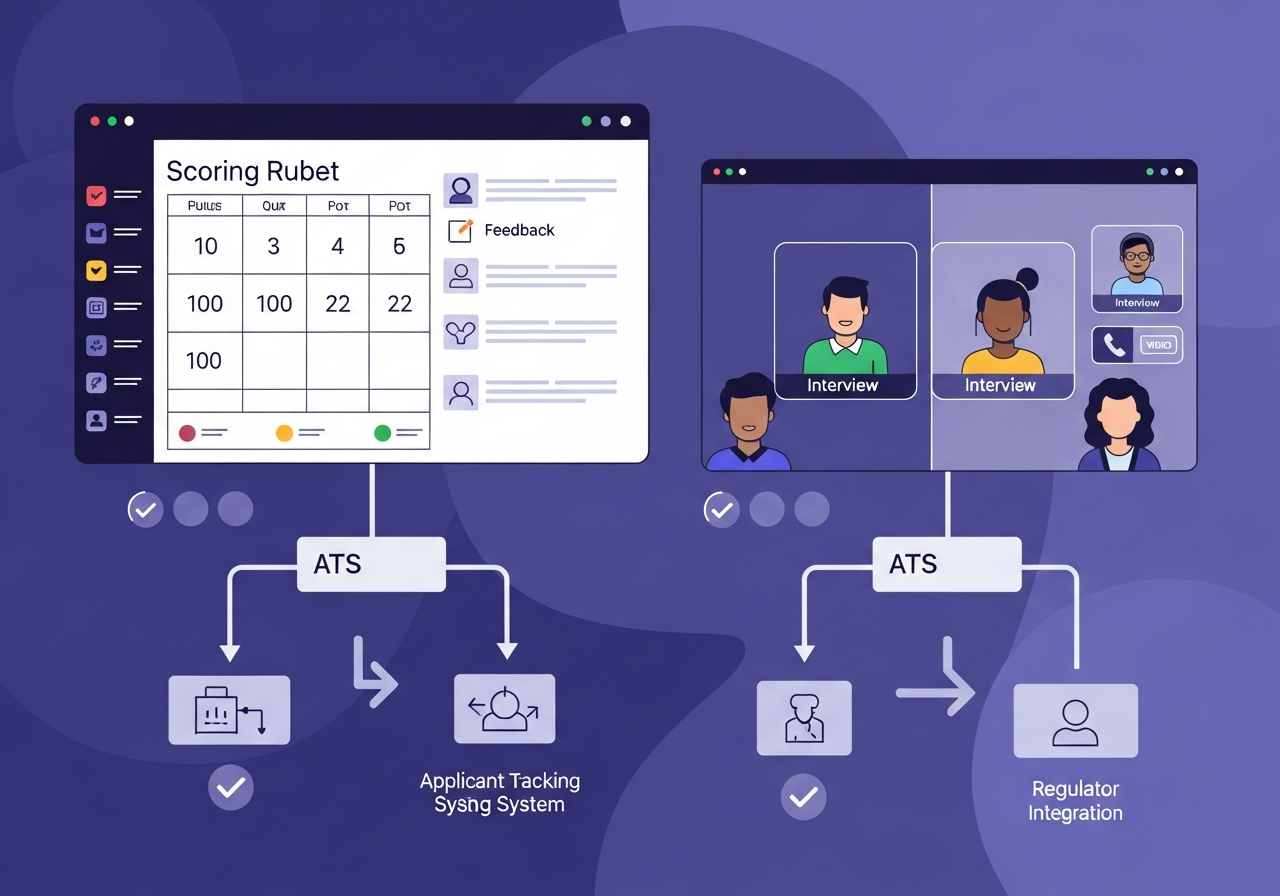

Transparent scoring is essential for adoption

Nearly every AI interviewing platform provides automated candidate scoring. However, scoring systems vary dramatically in how transparent and configurable they are.

Recruiters are unlikely to rely on scores they cannot understand or adjust. In staffing environments, scoring criteria often vary by client, job type, or geographic market.

What a strong scoring system looks like

A platform should allow teams to:

- Define and edit scoring rubrics tied to job-relevant competencies

- Adjust evaluation criteria by role, client, or division

- Review how individual answers contributed to a final score

- Override automated recommendations when needed, with documented rationale
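
As an illustration of the capabilities listed above, the following sketch shows one simple way a rubric-based score could keep per-competency contributions visible and require a rationale for overrides. The competency names, the 0-5 rating scale, the weights, and the threshold of 70 are assumptions made for the example, not a description of how any specific platform scores candidates.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Competency:
    name: str
    weight: float  # relative importance; normalized at scoring time


@dataclass
class ScoredInterview:
    candidate_id: str
    contributions: dict  # competency name -> points contributed to the total
    total: float
    recommendation: str
    override: Optional[dict] = None


def score_interview(candidate_id, rubric, ratings, advance_at=70.0):
    """Turn 0-5 ratings into a 0-100 score, keeping each competency's contribution."""
    total_weight = sum(c.weight for c in rubric)
    contributions = {}
    for c in rubric:
        rating = ratings[c.name]  # raises KeyError if a competency was never rated
        contributions[c.name] = round(100 * (c.weight / total_weight) * (rating / 5), 2)
    total = round(sum(contributions.values()), 2)
    recommendation = "advance" if total >= advance_at else "review"
    return ScoredInterview(candidate_id, contributions, total, recommendation)


def apply_override(result, recruiter, new_recommendation, rationale):
    """Record who changed the recommendation, from what, to what, and why."""
    if not rationale.strip():
        raise ValueError("an override requires a documented rationale")
    result.override = {
        "by": recruiter,
        "from": result.recommendation,
        "to": new_recommendation,
        "rationale": rationale,
    }
    result.recommendation = new_recommendation
    return result


# Hypothetical rubric for a warehouse role at one client.
rubric = [
    Competency("reliability", 2),
    Competency("safety_awareness", 2),
    Competency("communication", 1),
]
ratings = {"reliability": 4, "safety_awareness": 3, "communication": 5}

result = score_interview("cand-001", rubric, ratings)
print(result.contributions)  # shows how each answer area fed the total
print(result.total, result.recommendation)
```

Because the rubric is plain data, a different client or role can supply its own list of competencies and weights without changing the scoring logic.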

Why transparency matters for governance

Transparency is also increasingly important from a governance perspective.

Frameworks such as the NIST AI Risk Management Framework emphasize explainability and human oversight as core principles of trustworthy AI systems.

In hiring contexts, these principles translate directly into the need for interpretable scoring and clear decision logic. If a client, candidate, or regulator asks why a candidate was scored a certain way, you need a clear answer backed by artifacts.

Red flags in scoring systems

- Scores that change between runs with no clear explanation

- No visibility into how individual answers map to final scores

- Rubrics that cannot be adjusted by role or client

- No mechanism for recruiter overrides or documented exceptions

For a deeper look at how scoring and evaluation methods compare across the category, see our methodology guide.

Configurability determines whether a platform scales

Staffing firms rarely operate with a single standardized hiring process. Different clients require different interview structures, screening questions, compliance disclosures, and evaluation criteria.

A platform that works well in a controlled demo environment may become difficult to manage if workflows cannot be easily adapted.

What configurable platforms enable

Strong AI interviewing platforms allow recruiters and operations teams to:

- Generate interview questions from job descriptions without starting from scratch

- Customize interview flows without requiring vendor support tickets

- Create reusable interview templates across similar roles

- Adjust interview length and format by role family

- Modify evaluation criteria across clients or divisions independently

Why this matters for staffing specifically

In practice, configurability often determines whether an implementation succeeds or stalls. A staffing firm with 15 clients and 40 active role types cannot afford to submit a support ticket every time a new interview flow is needed. This is especially important as open reqs per recruiter rose 56% between 2022 and 2024 (2024).

The best platforms treat configurability as a core product principle rather than a professional services upsell.

ATS integration should extend the workflow

Another area where platforms diverge significantly is integration with applicant tracking systems.

Some products simply export interview summaries as PDF attachments. While technically an integration, this approach often creates additional administrative work for recruiters who must then manually update candidate records.

What good ATS integration looks like

A more effective integration treats the ATS as the system of record and updates it directly.

Key capabilities to look for include:

- Bidirectional synchronization of candidate data

- Automatic updates to candidate stages and statuses based on interview outcomes

- Structured notes written directly to the candidate record, not buried in attachments

- Real-time workflow updates triggered by interview completion, scoring thresholds, or disqualification

Without these capabilities, interview automation can create disconnected systems rather than streamline the recruiting workflow.

Common ATS integration pitfalls

- Integration is listed as available but only supports basic data export

- Notes are unstructured free text that recruiters cannot search or filter

- Stage changes require manual intervention despite automation claims

- No error handling or retry logic when API calls fail
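
The difference between structured write-back and a fragile export can be sketched in a few lines. This example assumes a hypothetical `send` function that wraps whatever API your ATS exposes, and a hypothetical `TransientATSError` for failures worth retrying. The field names are illustrative and are not taken from Bullhorn, JobAdder, or any other ATS.

```python
import time


class TransientATSError(Exception):
    """Hypothetical error for failures worth retrying (timeouts, rate limits)."""


def build_writeback(candidate_id, score, stage, notes):
    """Build discrete, searchable fields instead of a free-text blob or a PDF."""
    return {
        "candidate_id": candidate_id,
        "interview_score": score,
        "stage": stage,
        "notes": [{"competency": k, "summary": v} for k, v in notes.items()],
    }


def write_with_retry(send, payload, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call send(payload); on a transient failure, back off exponentially and retry."""
    for attempt in range(1, attempts + 1):
        try:
            return send(payload)
        except TransientATSError:
            if attempt == attempts:
                raise  # surface the failure so it can be queued or alerted on
            sleep(base_delay * 2 ** (attempt - 1))  # waits 1s, then 2s, then 4s, ...


# Simulated ATS call that fails twice before succeeding.
calls = []


def flaky_send(payload):
    calls.append(payload["candidate_id"])
    if len(calls) < 3:
        raise TransientATSError("simulated timeout")
    return {"status": "ok"}


delays = []  # capture the waits instead of actually sleeping in this demo
payload = build_writeback(
    "cand-001", 76.0, "recruiter_review",
    {"reliability": "Consistent attendance history"},
)
response = write_with_retry(flaky_send, payload, sleep=delays.append)
print(response, delays)
```

During a pilot, it is worth asking the vendor what happens in the final `raise` case: whether failed updates are queued, logged, or silently dropped.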

For staffing firms running on platforms like Bullhorn, JobAdder, or enterprise ATS systems, confirm exactly which fields are written back and where they appear in the recruiter workflow.

Governance and auditability are becoming core requirements

As AI systems take a larger role in hiring decisions, governance requirements are increasing.

The regulatory landscape

Regulations such as New York City's Automated Employment Decision Tool (AEDT) law require bias audits and transparency around automated decision systems. The EU AI Act classifies hiring AI as high-risk, requiring documentation, human oversight, and risk management.

Even outside regulated jurisdictions, enterprise buyers increasingly expect:

- Audit trails for interview questions, rubric changes, and scoring updates

- Logs of recruiter overrides or workflow modifications

- Documentation of evaluation logic and how models produce scores

- Evidence of ongoing bias monitoring and adverse impact analysis
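
One common starting point for adverse impact analysis is the impact ratio: each group's selection rate divided by the highest group's selection rate, with ratios below 0.8 (the "four-fifths" rule of thumb from the EEOC's Uniform Guidelines) flagged for review. The sketch below uses made-up counts and hypothetical group labels. It is a screening heuristic, not a substitute for a formal bias audit or legal advice.

```python
def impact_ratios(outcomes):
    """outcomes maps group -> (advanced, total). Returns group -> ratio vs the top rate."""
    rates = {g: adv / tot for g, (adv, tot) in outcomes.items() if tot > 0}
    if not rates:
        raise ValueError("no groups with candidates")
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any advanced candidates")
    return {g: round(rate / best, 3) for g, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Return the groups whose impact ratio falls below the threshold."""
    return sorted(g for g, r in ratios.items() if r < threshold)


# Illustrative counts only: (candidates advanced, candidates interviewed).
outcomes = {
    "group_a": (48, 80),  # selection rate 0.60
    "group_b": (30, 75),  # selection rate 0.40
}

ratios = impact_ratios(outcomes)
print(ratios)
print(flag_groups(ratios))
```

A flagged group does not prove bias on its own, especially with small samples, but it tells you where to look and what to ask the vendor to explain.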

Why this matters for staffing firms

Staffing firms face a unique challenge. They are deploying AI interviewing tools on behalf of their clients, which means the compliance burden extends to both the staffing firm and the end client.

A platform that cannot produce clear audit artifacts creates liability. A platform that makes governance easy creates a competitive advantage.

For more on bias reduction and fair hiring practices, see our dedicated guide.

Candidate experience separates good platforms from bad ones

The best AI interviewing platform in the world is useless if candidates do not complete the interview. Completion rate is the single most important metric for any automated screening step.

What drives high completion rates

- A familiar format that candidates already know how to use

- Clear time expectations communicated before the interview starts

- Mobile-first design that works without downloads or account creation

- The ability to pause and resume without losing progress

- Short, focused interviews that respect the candidate's time

What drives drop-off

- Requiring app downloads, browser permissions, or account setup

- Long, multi-section interviews early in the funnel

- Confusing interfaces or unclear instructions

- No clear indication of what happens next after completion

Candidate experience as a competitive advantage

In staffing, candidate experience directly affects fill rates. Candidates who have a poor interview experience are less likely to complete the process, less likely to show up, and less likely to accept an offer.

The best platforms feel helpful and efficient. The worst feel robotic and impersonal.

Accessibility and language support affect reach

Staffing firms frequently recruit across diverse candidate populations, including individuals who may prefer different languages or require accommodations.

AI interviewing platforms should support:

- Multilingual interview experiences with validated translations

- Candidate accommodation requests with clear escalation paths

- Alternative interview formats when the standard flow does not work

- Accessible user interfaces that meet WCAG guidelines

Guidance from the U.S. Equal Employment Opportunity Commission increasingly highlights the importance of ensuring AI-enabled hiring tools do not disadvantage candidates with disabilities or limited access to technology.

For global teams, language coverage and localization quality are especially critical.

Fraud detection and identity verification are emerging priorities

As remote interviewing becomes more common, organizations are paying greater attention to identity verification and interview integrity.

Common risks

- Impersonation, where someone other than the candidate completes the interview

- External coaching, where a third party feeds answers in real time

- AI-generated responses, where candidates use language models to craft answers

- Location misrepresentation, where candidates claim to be in a geography they are not

What platforms are doing about it

To mitigate these risks, some platforms now offer features such as:

- Identity verification through document capture and selfie matching

- Behavioral anomaly detection that flags unusual patterns

- Cheating detection signals that identify possible coaching or automation

- Location verification when geographic eligibility matters

- Audit trails documenting interview activity and integrity signals

While these tools cannot eliminate risk entirely, they provide important signals that help recruiters evaluate candidate authenticity. For roles involving cash handling, access to secure facilities, or remote work with sensitive data, these controls can significantly reduce bad hires.

What differentiates leading AI interviewing platforms

Across the market, several patterns are becoming clear.

The strongest platforms tend to combine:

- Multiple interview modalities including phone and video

- Highly configurable workflows that adapt to different clients and roles

- Transparent scoring systems with rubric-based evaluation

- Deep ATS integrations that treat the ATS as the system of record

- Strong governance and audit capabilities

- Support for accessibility and multilingual candidate experiences

- Fraud detection and identity verification features

These capabilities allow staffing firms to deploy AI interviewing technology across a wide range of roles and client environments.

Where the category often falls short

At the same time, buyers should watch for common gaps:

- Robotic interactions that damage candidate trust and reduce completion rates

- Opaque scoring that cannot be explained to clients, candidates, or auditors

- Shallow ATS integration that creates more work instead of reducing it

- Missing governance controls that expose firms to compliance risk

- Single-modality platforms that force all candidates into one format

Evaluation checklist for staffing buyers

Use this checklist when evaluating AI interviewing platforms. It covers the capabilities that matter most for staffing firms operating at scale.

| Category | Key questions |
|---|---|
| Interview modality | Does it support phone, video, and chat? Can you configure by role? |
| Scoring transparency | Can you see how scores are calculated? Can rubrics be edited by role and client? |
| Configurability | Can you create and modify interview flows without vendor support? |
| ATS integration | Does it write structured data back to your ATS? Are stage changes automated? |
| Governance | Are there audit trails for scoring changes, rubric edits, and recruiter overrides? |
| Candidate experience | What are completion rates by role type? Is the experience mobile-first? |
| Accessibility | Does it support multilingual interviews and candidate accommodations? |
| Fraud detection | Does it offer identity verification, coaching detection, or location checks? |
| Pricing | Is pricing per interview, per seat, or platform fee? Are there channel-specific charges? |
| References | Can the vendor provide references from staffing firms of similar size and complexity? |

For a more detailed procurement framework, see our AI Recruiting Evaluation Checklist.

How to run a meaningful pilot

A pilot should answer one question: does this platform improve our recruiting outcomes enough to justify the investment?

Pilot design

- Duration: 3 to 4 weeks

- Scope: 2 to 3 role families across 1 to 2 clients

- Baseline: Measure current metrics before the pilot starts

- Volume: Ensure enough candidates flow through to generate meaningful data

Metrics to track

- Interview completion rate by role type and modality

- Time from application to completed interview

- Recruiter hours saved per requisition

- Hiring manager satisfaction with candidate quality

- Show rate for candidates who completed AI interviews vs those who did not

- Client feedback on submittal quality
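
The first metric above is easy to compute from a simple export of pilot invitations. This sketch assumes each row carries a role family, a modality, and a completed flag. The field names and sample rows are hypothetical, and real pilots need far more volume than shown here.

```python
from collections import defaultdict


def completion_rates(invites):
    """Group invitations by (role_family, modality) and return completed / invited."""
    counts = defaultdict(lambda: [0, 0])  # key -> [completed, invited]
    for row in invites:
        key = (row["role_family"], row["modality"])
        counts[key][1] += 1
        if row["completed"]:
            counts[key][0] += 1
    return {key: round(done / total, 3) for key, (done, total) in counts.items()}


# Made-up pilot rows for illustration.
invites = [
    {"role_family": "light_industrial", "modality": "phone", "completed": True},
    {"role_family": "light_industrial", "modality": "phone", "completed": True},
    {"role_family": "light_industrial", "modality": "phone", "completed": False},
    {"role_family": "light_industrial", "modality": "video", "completed": True},
    {"role_family": "light_industrial", "modality": "video", "completed": False},
    {"role_family": "engineering", "modality": "video", "completed": True},
]

for key, rate in completion_rates(invites).items():
    print(key, rate)
```

Capturing the same breakdown before the pilot starts gives you the baseline to compare against.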

What reveals the truth

- Do recruiters trust the scores enough to change behavior?

- Do hiring managers accept AI-screened candidates without re-screening?

- Does the ATS write-back actually work in your production environment?

- Can you explain to a client how a candidate was evaluated?

The bottom line

AI interviewing technology has the potential to dramatically improve recruiter productivity and hiring speed. But selecting the right platform requires careful evaluation.

Organizations should look beyond surface-level automation and focus on how the system fits into the broader recruiting workflow. The most effective solutions are not simply AI tools. They are operational systems designed to support real-world hiring environments.

For staffing firms, the right platform reduces recruiter workload while producing consistent, defensible, client-ready evaluation evidence. The wrong platform adds complexity, creates compliance risk, and delivers an experience that neither candidates nor clients trust.

FAQs

Should we use phone or video interviews for hourly roles?

Phone interviews typically achieve higher completion rates for hourly and field roles because they require no technology setup. Use video for professional and technical roles where visual context adds value.

How do we ensure scoring is fair across different clients?

Use client-specific rubrics tied to job-relevant competencies. Monitor scoring distributions across demographic groups and review artifacts regularly to ensure consistency.

What ATS integration capabilities matter most?

The most important capability is structured write-back of scores, notes, and stage changes directly into the candidate record. Avoid platforms that only export PDF summaries or require manual data entry.

How do we handle compliance with AI hiring regulations?

Choose platforms with transparent scoring, audit trails, and bias monitoring tools. Document your evaluation methodology and maintain records of how decisions are made. Consult legal counsel for jurisdiction-specific requirements.

How this buyer guide was produced

Buyer guides apply our 100-point evaluation rubric to produce ranked recommendations. Evaluation covers ATS integration depth, structured scoring design, candidate experience, compliance readiness, and implementation quality. No vendor paid to be included or ranked.

About the author

Editorial Research Team

Platform Evaluation and Buyer Guides

Practitioners with direct experience in enterprise TA leadership, HR technology procurement, and staffing operations. All buyer guides apply our published 100-point evaluation rubric.
